Spotting fakes: How do non-experts approach deepfake video detection?

Bibliographic information

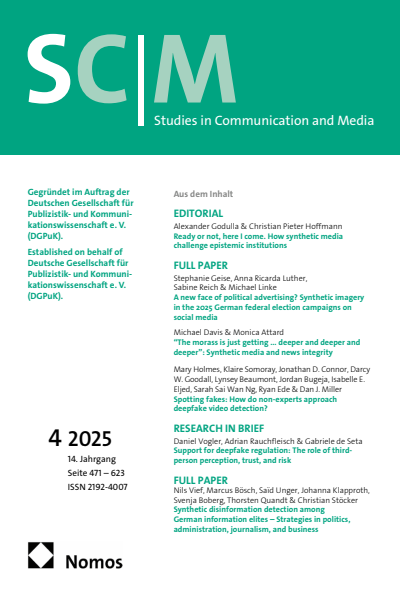

SCM Studies in Communication and Media

Volume 14 (2025), Issue 4

- Publisher: Nomos, Baden-Baden

- Copyright Year: 2026

- ISSN-Online: 2192-4007

- ISSN-Print: 2192-4007

- Preview:

Intervening to bolster human detection of deepfakes has proven difficult. Little is known about the behavioural strategies people employ when attempting to detect deepfakes. This paper reports two studies in which non-experts completed a deepfake detection task. As part of the task, participants were presented with a series of short videos – half of which were deepfakes – and asked to categorise each video as either deepfake or authentic. In Study 1 (N = 391), an online study, participants were randomly assigned to a control or intervention group (in which they received a list of detection strategies before the detection task). After the detection task, participants elaborated on the approach they employed during the task. In Study 2 (N = 32), a laboratory-based study, participants’ gaze behaviour (fixations and saccades) was recorded during the detection task. No detection strategies were provided to Study 2 participants. Consistent with prior research, Study 1 participants showed modest detection accuracy (M = .61, SD = .14) – only somewhat above chance levels (.50) – with no difference between the intervention and control groups. However, content analysis of participants’ self-reports revealed that the intervention successfully shifted participants’ attention toward cues such as skin texture and facial movements, while the control group more frequently reported relying on intuition (gut feeling) and features such as body language. Study 2 found similar levels of detection accuracy (M = .65, SD = .20). Participants focused their gaze primarily on the eyes and mouth rather than the body, showing a slight preference for the eyes over the mouth. No differences in gaze were found between authentic and deepfake videos or between correctly and incorrectly categorised videos. The findings suggest interventions can modify detection behaviours (even without improving accuracy). Future interventions may benefit from directing attention from the eyes toward more diagnostic features, such as face–body inconsistencies and the face boundary.
